
Vision AI (also known as computer vision) is a field of computer science that trains computers to replicate the human vision system. This enables digital devices (such as face detectors and QR code scanners) to identify and process objects in images and videos, much as humans do.

Personalized image search on eCommerce stores, 3D model building (photogrammetry), aerial images on maps, OCR scanning in retail outlets, face recognition, image detectors, and MRI reconstruction are some of the innovative use cases of computer vision that we have today.

But when was this technology introduced? How did it evolve? What future possibilities does it bring for businesses, irrespective of industry? The following sections address these three questions and briefly explain how vision AI works. So, let’s get started.

The History and Evolution of Computer Vision

The first computer vision experiments took place in the 1950s, when neural networks were used to detect the edges of an object and sort simple shapes such as squares and circles. Later, in the 1970s, a commercial use case of computer vision was implemented: interpreting handwritten text using optical character recognition (OCR), which was used to read written text for the blind. In the 1990s, as the internet matured, facial recognition programs thrived. From 2010 onward, deep learning helped computers train themselves and improve over time.

Today, this technology is used in domains ranging from automotive and healthcare to retail and smartphones. Its progress is driven by affordable computing power, better hardware, and new algorithms such as convolutional neural networks. As a result, outputs are more accurate and the technology supports better use cases.

Owing to its potential, the computer vision AI market was expected to be worth 1.6 billion USD by the end of 2019. | Source: Statista

Computer Vision AI: How Does It Work?

Think of computer vision AI as a jigsaw puzzle: there are several pieces that you have to assemble to form an image. That is how neural networks for computer vision work. They distinguish the different pieces of an image and build a model of its subcomponents.

Instead of being given hints for recognizing an object, the computer is fed images from which it learns to identify the object precisely. Suppose you have to train a computer to identify a cat. Instead of giving it hints such as tails, whiskers, and pointy ears, you feed the system hundreds (or millions) of images of cats. The resulting model learns the features that make up a cat and that differentiate it from look-alike animals.
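To make the idea of learning from labeled examples concrete, here is a minimal sketch. It is not a neural network: it uses a much simpler nearest-centroid classifier, and the two-number feature vectors and labels are invented for illustration (a real system would learn its features from raw pixels).

```python
from math import dist

# Hypothetical feature vectors (say, ear pointiness and snout length),
# invented for illustration only.
training_data = {
    "cat": [(0.9, 0.2), (0.8, 0.3), (0.85, 0.25)],
    "dog": [(0.4, 0.8), (0.3, 0.7), (0.35, 0.9)],
}

def train(examples):
    """Average each label's examples into one centroid per label."""
    centroids = {}
    for label, vectors in examples.items():
        count = len(vectors)
        size = len(vectors[0])
        centroids[label] = tuple(
            sum(vector[i] for vector in vectors) / count for i in range(size)
        )
    return centroids

def predict(centroids, features):
    """Return the label whose centroid lies closest to the given features."""
    return min(centroids, key=lambda label: dist(centroids[label], features))

model = train(training_data)
print(predict(model, (0.82, 0.28)))  # a new, unlabeled example
```

No rule such as "cats have pointy ears" is written anywhere in the code; the decision comes entirely from the examples, which is the point the paragraph above makes.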

Applications of Computer Vision AI:

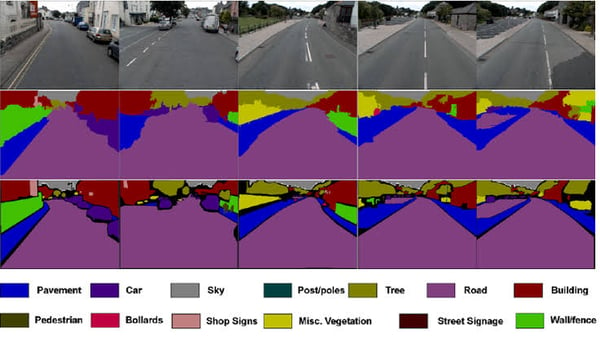

1. Image Segmentation

It is the process of partitioning an image into multiple regions based on the characteristics of its pixels. Generally used for inspection and analysis, image segmentation involves separating the foreground from the background or clustering pixels by similarity in color or shape. The image shown below exemplifies image segmentation, where parts of the image are differentiated by color.

Image Courtesy: Research Gate

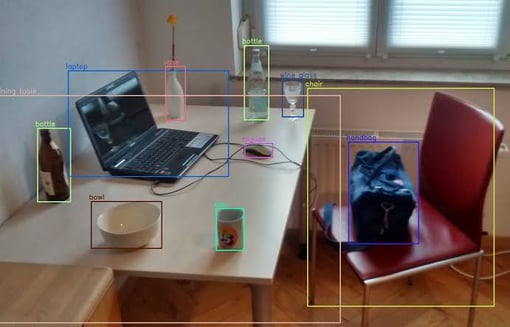
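As a rough illustration of separating foreground from background by pixel characteristics, the sketch below applies a fixed brightness threshold to a tiny grayscale grid. The pixel values and the threshold of 128 are assumptions chosen for the example; production systems typically use far more sophisticated methods.

```python
# Tiny grayscale image (0 = black, 255 = white); values invented for illustration.
image = [
    [10, 12, 200, 210],
    [11, 220, 215, 13],
    [9, 14, 205, 12],
]

def segment(pixels, threshold=128):
    """Label each pixel 1 (foreground) if brighter than the threshold, else 0."""
    return [[1 if value > threshold else 0 for value in row] for row in pixels]

mask = segment(image)
for row in mask:
    print(row)
```

Each pixel ends up in one of two regions, which is the simplest possible form of partitioning an image by similarity.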

2. Object Detection

This field of computer vision AI deals with detecting one or more objects in an image or a video. For example, smart surveillance cameras detect people and their activities (lack of movement, objects such as guns or knives, etc.) so that an alert can be raised for suspicious activity.

Image Courtesy: Research Gate

3. Facial Recognition

The facial recognition technique aims at detecting and identifying human faces in an image. It is one of the more complex applications of computer vision because of the variability in human faces (expression, pose, skin color) and in capture conditions (camera quality, position or orientation, image resolution, etc.). Nevertheless, the technique is widely used. Smartphones use it for user authentication. Facebook uses the same technique when it suggests tags for people in a picture.

Image Courtesy: Apple

4. Edge Detection

Edge detection deals with finding the boundaries of objects within an image. This is done by detecting discontinuities in brightness. Edge detection can be a great help in data extraction and image segmentation.

Image Courtesy: Wikipedia
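Detecting discontinuities in brightness can be sketched in a few lines. The example below uses a simple first-difference between horizontal neighbors rather than a standard operator such as Sobel or Canny; the image values and the threshold of 50 are assumptions for illustration.

```python
# Grayscale image with a dark region on the left and a bright region on the right.
image = [
    [10, 10, 10, 200, 200],
    [10, 10, 10, 200, 200],
    [10, 10, 10, 200, 200],
]

def horizontal_edges(pixels, threshold=50):
    """Mark a pixel as an edge when brightness jumps sharply from its left neighbor."""
    edges = []
    for row in pixels:
        edge_row = [0]  # the first column has no left neighbor to compare with
        for x in range(1, len(row)):
            jump = abs(row[x] - row[x - 1])
            edge_row.append(1 if jump > threshold else 0)
        edges.append(edge_row)
    return edges

edges = horizontal_edges(image)
for row in edges:
    print(row)
```

The column where the brightness changes from 10 to 200 is marked as the boundary; everything else is left blank.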

5. Pattern Recognition

Pattern recognition is the ability of a system to detect arrangements of characteristics or data. Here, a pattern can be a recurring sequence of data or a set of data added to the system.

Image Courtesy: Wikipedia

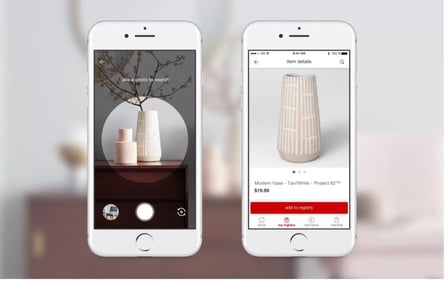

6. Visual Search

Visual search simplifies the way users search for products or information online. Instead of relying on textual queries, individuals can now upload images to the platform and use them as search inputs.

For instance, suppose you find a vase you like. Instead of describing it, you snap a picture and upload it. The underlying technology analyzes its visual characteristics, such as colors, shapes, and patterns, and compares them against a vast database of images. The system identifies similarities and presents the user with relevant matches.

Image Courtesy: Impossible marketing

For eCommerce businesses, visual search can help enhance customer engagement and increase sales, as users can effortlessly find products that match their preferences. For consumers, it translates to a more intuitive and convenient online shopping experience.
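The compare-against-a-database step of visual search can be sketched as follows. This is only an illustration under stated assumptions: images are represented as short lists of RGB pixels, the visual characteristic is a coarse color histogram, similarity is cosine similarity, and the catalog entries (`blue_vase`, `red_mug`, `green_plant`) are hypothetical. Real systems usually compare learned feature embeddings instead.

```python
from math import sqrt

def color_histogram(pixels, bins=2):
    """Count pixels per coarse RGB bucket and normalize the counts to fractions."""
    step = 256 // bins
    hist = [0.0] * (bins ** 3)
    for r, g, b in pixels:
        index = (r // step) * bins * bins + (g // step) * bins + (b // step)
        hist[index] += 1
    return [count / len(pixels) for count in hist]

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hypothetical product catalog: each image is a flat list of (R, G, B) pixels.
catalog = {
    "blue_vase": [(20, 30, 200)] * 6 + [(240, 240, 240)] * 2,
    "red_mug": [(210, 20, 30)] * 7 + [(240, 240, 240)] * 1,
    "green_plant": [(30, 180, 40)] * 8,
}

def search(query_pixels, catalog, top_k=2):
    """Rank catalog items by histogram similarity to the query image."""
    query = color_histogram(query_pixels)
    scored = [
        (cosine_similarity(query, color_histogram(pixels)), name)
        for name, pixels in catalog.items()
    ]
    return [name for _, name in sorted(scored, reverse=True)[:top_k]]

# A user's snapshot of a mostly blue object on a light background.
photo = [(25, 35, 190)] * 5 + [(235, 235, 235)] * 3
print(search(photo, catalog))
```

The uploaded photo is never described in words; the ranking comes only from how closely its color distribution matches each catalog image.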

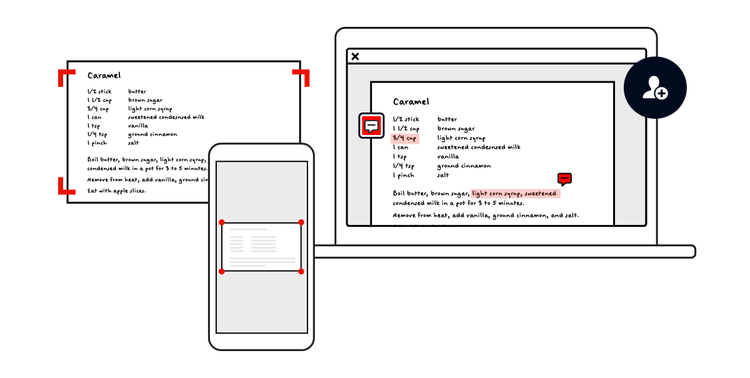

7. Optical Character Recognition (OCR)

OCR is a technology designed to convert different types of documents, including scanned paper documents and images, into editable and searchable data. It works by recognizing the shapes and patterns of characters in an image and translating them into text that computers can process.

For example, consider a scenario where you have a paper document containing vital information. By using OCR, you can scan the document, and the technology will extract the text, allowing you to edit or search for specific information within the document.

Image Courtesy: Adobe

OCR technology finds widespread use in digitizing physical documents, automating data entry tasks, enabling translation services, creating accessible documents for visually impaired individuals, and enhancing mobile applications for tasks like scanning receipts or business cards. Its ability to transform printed or handwritten text into digital format greatly simplifies various tasks, making information more accessible and usable in the digital age. OCR has also been integrated into numerous online tools, such as image-to-text converters, so that everyone can benefit from this useful technology.
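The core idea of recognizing character shapes and translating them into text can be illustrated with simple template matching. This is a toy sketch, not how production OCR engines work: the 3x3 glyph bitmaps, the three-letter alphabet, and the function names are all invented for the example.

```python
# Hypothetical 3x3 glyph templates (1 = ink, 0 = blank), invented for illustration.
TEMPLATES = {
    "L": ("100", "100", "111"),
    "T": ("111", "010", "010"),
    "I": ("010", "010", "010"),
}

def mismatches(template, glyph):
    """Count the pixels at which a template and a scanned glyph differ."""
    return sum(
        a != b
        for template_row, glyph_row in zip(template, glyph)
        for a, b in zip(template_row, glyph_row)
    )

def match_glyph(glyph):
    """Return the character whose template differs from the glyph in the fewest pixels."""
    return min(TEMPLATES, key=lambda char: mismatches(TEMPLATES[char], glyph))

def recognize(glyphs):
    """Translate a sequence of scanned glyph bitmaps into a text string."""
    return "".join(match_glyph(glyph) for glyph in glyphs)

scanned = [
    ("111", "010", "010"),  # clean T
    ("010", "010", "011"),  # I with one noisy pixel
    ("100", "100", "111"),  # clean L
    ("110", "100", "111"),  # L with one noisy pixel
]
print(recognize(scanned))
```

Because each glyph is matched to its closest template rather than an exact one, a stray pixel from a poor scan does not change the recognized character.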

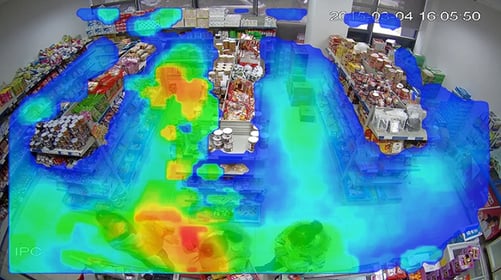

8. Customer Heatmap

Customer heatmap analysis is used to observe and analyze customer behavior within physical spaces. By strategically placing cameras equipped with computer vision capabilities, businesses can capture real-time footage of customer movements. These captured video streams are then processed by advanced algorithms, which track and interpret customer interactions.

The output of this analysis is represented visually through heatmaps. These heatmaps offer a color-coded depiction of customer engagement levels, using warm colors to highlight areas where customers frequently dwell or engage with products and displays.

Image Courtesy: LinkedIn

For instance, in a retail store, a heatmap might reveal that a particular clothing rack consistently attracts customer attention, while in a supermarket, it could identify popular sections or aisles.

These heatmaps provide businesses with actionable insights. By understanding customer behavior patterns, businesses can optimize their physical layouts. They can strategically place high-demand products, improve staff allocation for enhanced customer service, and design marketing strategies tailored to specific customer preferences.
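Once customer positions have been extracted from video, turning them into a heatmap is mostly a counting exercise. The sketch below assumes a hypothetical tracker has already produced floor coordinates in metres; the positions, floor size, and one-metre cell size are invented for illustration.

```python
# Hypothetical (x, y) floor positions in metres, as a tracking step might report them.
positions = [(0.5, 0.5), (0.7, 0.4), (0.6, 0.9), (2.5, 0.5), (0.2, 0.1), (2.8, 1.6)]

def build_heatmap(points, width_m, height_m, cell_m=1.0):
    """Count how many observed positions fall into each grid cell of the floor plan."""
    cols = int(width_m / cell_m)
    rows = int(height_m / cell_m)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in points:
        # Clamp so that points exactly on the far boundary land in the last cell.
        col = min(int(x / cell_m), cols - 1)
        row = min(int(y / cell_m), rows - 1)
        grid[row][col] += 1
    return grid

heatmap = build_heatmap(positions, width_m=3, height_m=2)
for row in heatmap:
    print(row)
```

Cells with higher counts correspond to the warm colors in a rendered heatmap, marking the areas where customers spend the most time.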

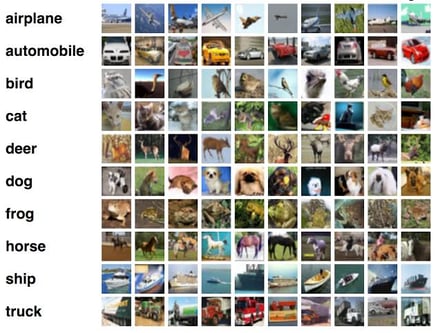

9. Image Classification

Image classification involves assigning an image to a category based on the visual content present in it. The process focuses on the relationships between nearby pixels. The classification system includes a database of predefined patterns, and these patterns are compared with the detected object to determine what it is. Image classification has significant applications in areas such as vehicle navigation, biometrics, video surveillance, and biomedical imaging.

The Present and Future of Computer Vision

Computer vision opens up many possibilities for consumers and businesses. Self-driving cars, medical diagnosis, image labeling, and cashier-less checkout are some of the applications that show how much it can offer different industries.

However, one of the major challenges in implementing computer vision at a larger scale is the large volume of data needed to train the models. With better resources available for training, computer vision has the potential to surpass the human ability to recognize, classify, and detect diverse images and videos.