From natural language processing to computer vision and beyond, a wide range of AI technologies is transforming businesses across industries. One of the most visible recent developments in this space is ChatGPT, which has significantly advanced what AI can do. With recent updates such as GPT-4, enterprises can unlock new possibilities and stay ahead in an ever-changing business landscape.

A key driver behind this cutting-edge technology is multimodal AI. GPT-4, for instance, can accept images as well as text as input.

By harnessing the power of Multimodal AI, enterprises can automate processes, improve decision-making, and deliver more personalized experiences to customers. It has tremendous potential for use in industries such as healthcare, finance, and retail, where accurate and tailored responses are crucial.

So let’s look at what multimodal AI is, how it works, its advantages, and its real-world applications.

What is Multimodal AI?

Multimodal AI is an advanced form of artificial intelligence that can analyze and interpret multiple types of data, or modalities, at the same time, allowing it to generate more accurate and human-like responses. Unlike traditional AI systems, which typically rely on a single modality, multimodal AI can combine information from different sources, such as images, sound, and text, to build a more nuanced understanding of a given situation.

This makes it a highly versatile technology that can adapt to various situations and contexts. With its ability to recognize and interpret different forms of data, multimodal AI can simulate human perception and understanding, paving the way for more intuitive and natural human-machine interaction.

How does Multimodal AI work?

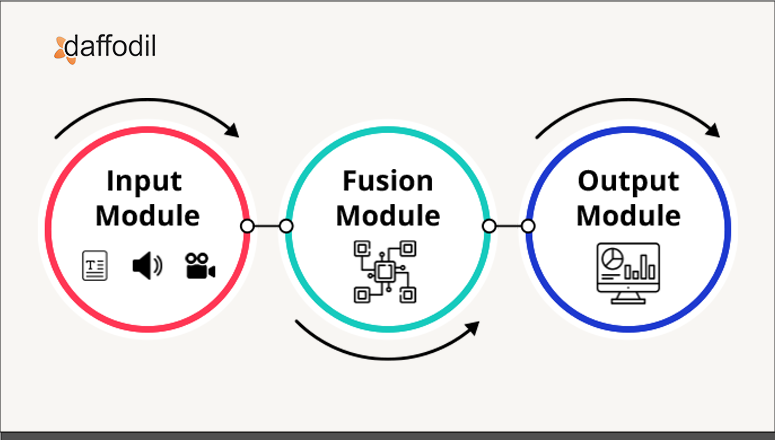

A multimodal AI architecture typically consists of three key components: an input module, a fusion module, and an output module.

The input module consists of unimodal neural networks which receive and preprocess different types of data separately. This module may use different techniques, such as natural language processing or computer vision, depending on the specific modality. For example, when processing text data, the input module may use techniques such as tokenization, stemming, and part-of-speech tagging to extract meaningful information from the text.
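The separate, per-modality preprocessing described above can be sketched in plain Python. This is a toy illustration rather than a neural network: the suffix-stripping `stem` function is a simplified stand-in for a real stemmer (such as Porter's), part-of-speech tagging is omitted, and `encode_image` is a hypothetical placeholder for a convolutional or vision-transformer encoder. All function names here are illustrative assumptions.

```python
import re
from typing import List

# Toy suffix list; a production system would use a proper stemmer such as Porter's.
SUFFIXES = ("ing", "ed", "ly", "es", "s")


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def stem(token: str) -> str:
    """Strip the first matching suffix, keeping at least three characters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def encode_text(text: str, vocab: List[str]) -> List[float]:
    """Bag-of-stems vector: how often each vocabulary stem occurs in the text."""
    stems = [stem(t) for t in tokenize(text)]
    return [float(stems.count(v)) for v in vocab]


def encode_image(pixels: List[List[float]]) -> List[float]:
    """Hypothetical stand-in image encoder: mean and max brightness of a grayscale image."""
    flat = [p for row in pixels for p in row]
    return [sum(flat) / len(flat), max(flat)]


# Each modality is processed independently, producing its own feature vector.
review = "Screen arrived broken; returning it. Returns are easy."
text_features = encode_text(review, vocab=["screen", "broken", "return"])
image_features = encode_image([[0.0, 0.5], [1.0, 0.5]])
print(text_features, image_features)
```

The key design point is independence: each encoder knows nothing about the others, so the output vectors can differ in length and meaning until the fusion stage brings them together.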

Once features have been extracted, the fusion module integrates the information from the different modalities, such as text, images, audio, and video. Fusion can take many forms, from simple operations like concatenation to more complex approaches such as attention mechanisms, graph convolutional networks, or transformer models.

The goal of the fusion module is to capture the relevant information from each modality and combine it in a way that leverages the strengths of each modality.
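To make the contrast between simple and more complex fusion concrete, here is a minimal plain-Python sketch of two strategies: concatenation, and a single-query scaled dot-product attention weighting, which is a heavily simplified version of what attention layers do. The `query` vector stands in for a learned parameter and is an assumption of this example; in a trained model it, along with any projection layers that bring the modalities to a common size, would be learned.

```python
import math
from typing import List

Vector = List[float]


def concat_fusion(features: List[Vector]) -> Vector:
    """Simple fusion: join the per-modality vectors end to end."""
    return [x for vec in features for x in vec]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax (subtract the max before exponentiating)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fusion(features: List[Vector], query: Vector) -> Vector:
    """Attention-style fusion: score each modality against a query vector,
    turn the scores into weights with softmax, and return the weighted sum.
    All vectors must share one dimension (e.g. after a projection layer)."""
    dim = len(query)
    if any(len(vec) != dim for vec in features):
        raise ValueError("all modality vectors must match the query dimension")
    scale = math.sqrt(dim)
    scores = [sum(q * x for q, x in zip(query, vec)) / scale for vec in features]
    weights = softmax(scores)
    return [sum(w * vec[i] for w, vec in zip(weights, features)) for i in range(dim)]


# Text and image features of different lengths can only be concatenated...
print(concat_fusion([[1.0, 2.0], [3.0]]))
# ...whereas attention needs equal-sized vectors but weights them by relevance.
print(attention_fusion([[10.0, 0.0], [0.0, 10.0]], query=[1.0, 0.0]))
```

Concatenation preserves everything but leaves it to later layers to decide what matters; attention lets the model emphasize whichever modality is most relevant to the current input.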

Finally, the output module takes the fused information and generates the final output or prediction in a form that is meaningful to the task at hand. For example, if the task is to classify an image based on its content and a textual description, the output module might generate a label, or a ranking of labels corresponding to the classes the image most likely belongs to.
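A minimal sketch of such an output module, assuming the fused representation is a plain list of floats and the task is the image-plus-description classification example above. The label names, weights, and biases are made-up illustrative values; in practice they would be learned during training, typically together with the encoders and the fusion layer.

```python
import math
from typing import Dict, List, Tuple


def rank_labels(fused: List[float],
                weights: Dict[str, List[float]],
                biases: Dict[str, float]) -> List[Tuple[str, float]]:
    """Linear classification head: compute one score (logit) per label,
    convert the scores to probabilities with softmax, and return the
    labels sorted from most to least likely."""
    logits = {label: sum(w * x for w, x in zip(weights[label], fused)) + biases[label]
              for label in weights}
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {label: math.exp(z - m) for label, z in logits.items()}
    total = sum(exps.values())
    probs = {label: e / total for label, e in exps.items()}
    return sorted(probs.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical fused vector and hand-picked parameters, for illustration only.
ranking = rank_labels(
    fused=[0.9, 0.1],
    weights={"cat": [2.0, -1.0], "dog": [-1.0, 2.0]},
    biases={"cat": 0.0, "dog": 0.0},
)
print(ranking)  # "cat" is ranked first
```

Returning the full sorted list rather than only the top label supports both outputs mentioned above: a single label (take the first entry) or a ranking of labels.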

Overall, the purpose of this architecture is to generate an actionable output from multiple modalities, enabling the system to perform complex tasks that a single modality could not support.

Advantages of Multimodal AI

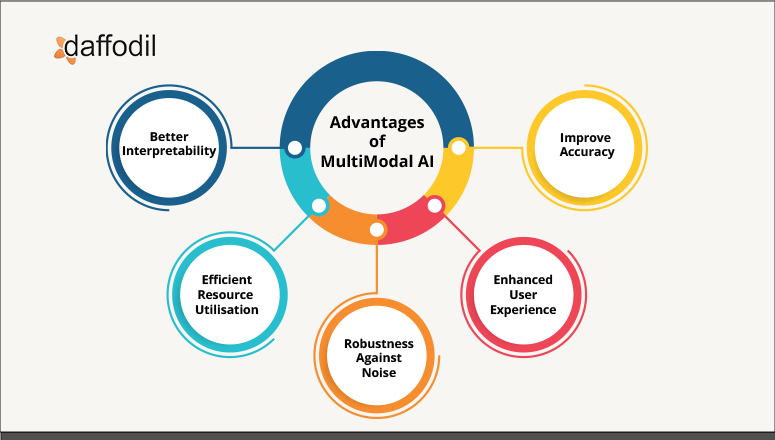

Multimodal AI offers several advantages, including:

1. Improved accuracy: By leveraging information from multiple modalities, multimodal AI can achieve higher accuracy and robustness compared to unimodal AI. For example, in a system that analyzes customer feedback for a product, combining text, image, and audio modalities can provide a more comprehensive understanding of customer sentiment.

2. Enhanced user experience: Multimodal AI can enhance the user experience by providing multiple ways for users to interact with the system. For example, in a virtual assistant system, users can interact with the system using voice, text, or gesture, providing greater convenience and accessibility.

3. Robustness against noise: By combining information from multiple modalities, multimodal AI can be more robust against noise and variability in the input data. For example, in a speech recognition system that combines audio and visual information, the system can continue to recognize speech even if the audio signal is degraded or the speaker's mouth is partially obscured.

4. Efficient usage of resources: Multimodal AI can help to make more efficient use of computational and data resources by enabling the system to focus on the most relevant information from each modality. For example, in a system that assesses social media posts for sentiment analysis, combining text, images, and metadata can help reduce the amount of irrelevant data that needs to be processed.

5. Better interpretability: Multimodal AI can help to improve interpretability by providing multiple sources of information that can be used to explain the system's output. For example, in a system that analyzes medical images for diagnosis, combining images with textual descriptions and other modalities can help to explain the reasoning behind the system's diagnosis and provide more transparency and accountability.
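The robustness argument in point 3 can be illustrated with late fusion, where each modality produces its own prediction and the predictions are combined afterwards. The sketch below assumes each modality outputs class probabilities plus a reliability score (for example, one derived from an estimate of audio noise); how those scores are obtained is outside the scope of this example, and all values used are made up.

```python
from typing import Dict, List


def late_fusion(predictions: List[Dict[str, float]],
                reliability: List[float]) -> Dict[str, float]:
    """Combine per-modality class probabilities with a reliability-weighted
    average, so a degraded modality contributes less to the final result."""
    total_weight = sum(reliability)
    labels = predictions[0].keys()
    return {label: sum(r * p[label] for r, p in zip(reliability, predictions)) / total_weight
            for label in labels}


# Hypothetical audio-visual speech recognition: noisy audio leans the wrong way,
# while lip movements clearly favour "bat" (the lips close for the "b" sound).
audio = {"bat": 0.4, "cat": 0.6}  # degraded signal, so low reliability
video = {"bat": 0.9, "cat": 0.1}  # clear view of the mouth, so high reliability
fused = late_fusion([audio, video], reliability=[0.2, 0.8])
print(max(fused, key=fused.get))  # "bat"
```

If the situation is reversed (clear audio, partially obscured mouth), lowering the video reliability shifts the weight back to audio, which is the kind of graceful degradation described in point 3.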

Applications of Multimodal AI across Various Industries

By harnessing the power of multiple data modalities, businesses can improve accuracy, efficiency, and effectiveness across a range of operations, resulting in better outcomes and increased competitiveness. Here are some real-world use cases of multimodal AI:

1. Healthcare - Multimodal AI can help improve medical imaging analysis, disease diagnosis, and personalized treatment planning. By combining medical images with patient records and genetic data, healthcare providers can gain a more accurate understanding of a patient's health, enabling them to tailor treatment plans to individual patients. This can result in better patient outcomes and improved efficiency in healthcare operations.

2. Retail - Multimodal AI can be used to enhance the customer experience and increase sales. By combining user behavior data, product images, and customer reviews, retailers can provide personalized recommendations and optimize product search. This can lead to increased customer satisfaction and loyalty.

3. Agriculture - Multimodal AI can help monitor crop health, predict yields, and optimize farming practices. By integrating satellite imagery, weather data, and soil sensor data, farmers can gain a richer understanding of crop health and optimize irrigation and fertilizer application, resulting in improved crop yields and reduced costs.

4. Manufacturing - Multimodal AI can be leveraged to improve quality control, predictive maintenance, and supply chain optimization. By incorporating audiovisual data, manufacturers can identify product defects and optimize manufacturing processes, leading to improved efficiency and reduced waste.

5. Entertainment - By analyzing different modalities, multimodal AI algorithms can extract features related to emotions, speech patterns, facial expressions, and actions. This can help content creators better understand their audience and tailor their content to specific demographics. For example, a movie or TV show could be analyzed to determine which scenes were most emotionally impactful for viewers, which characters were most relatable, and which types of humor were most effective. Ultimately, this can help create more engaging content and increase audience satisfaction.

According to a report, the healthcare industry is expected to be the largest user of multimodal AI technology, with a CAGR of 40.5% from 2020 to 2027.

Breaking Down the Barriers with Multimodal AI

From healthcare and finance to entertainment and agriculture, the benefits of multimodal AI are diverse and far-reaching. By combining the strengths of different modalities, businesses can uncover insights that were previously hidden, enabling them to make better decisions and improve outcomes.

As the technology continues to mature, we can expect even greater innovation and impact from multimodal AI in the years ahead.