A technical implementation guide for designing, building, and deploying AI in healthcare without compromising patient privacy or regulatory compliance.

Healthcare and artificial intelligence have arrived at a defining intersection. Diagnostic algorithms now detect tumors earlier than the human eye. Predictive models flag sepsis hours before vitals deteriorate. Chatbots triage millions of patients daily. Yet beneath every one of these advances lies an uncomfortable truth: AI thrives on data, and in healthcare, that data belongs to patients.

HIPAA, the Health Insurance Portability and Accountability Act, does not care that your model accuracy is impressive or that your engineers are brilliant. It cares whether patient data is handled with rigor, transparency, and accountability. Get it wrong, and the consequences span regulatory fines into the millions, class-action exposure, and something harder to recover: the erosion of patient trust.

This guide is written for engineers, architects, and clinical informatics teams who are building or deploying AI systems in healthcare environments. We will move from regulatory foundations through architecture, de-identification, pipeline security, LLM risks, and operational compliance, with enough technical depth to be immediately useful.

HIPAA compliance cannot be an afterthought. It must be engineered into the system from the first line of architecture.

Why HIPAA Compliance Matters in AI-Driven Healthcare

The modern AI development cycle rewards collecting vast amounts of data, training expressive models, and deploying at scale. That instinct runs directly counter to HIPAA's foundational philosophy: collect the minimum necessary data, restrict access strictly, and protect every disclosure. Understanding this tension is the first step to resolving it architecturally.

Healthcare organizations that fail to account for HIPAA in their AI programs risk serious penalties. Civil penalties are tiered by culpability: the statutory range is $100 to $50,000 per violation, with an annual cap per violation category of $1.5 million, and these figures are adjusted for inflation each year (the cap now exceeds $1.9 million). Knowingly obtaining or disclosing PHI in violation of HIPAA can also trigger criminal prosecution. But beyond fines, a breach that exposes patient records can end a health system's AI program entirely and damage its clinical reputation for years.

The organizations winning in healthcare AI are those that treat compliance as a design constraint, not a legal review step after the fact. Privacy-by-design architecture means every component of the data pipeline, every model artifact, and every inference endpoint is built with PHI handling as a first-class concern.

Understanding HIPAA Through an AI Lens

HIPAA is not a single monolithic rule. It is a set of interlocking regulations that together govern the creation, use, storage, and disclosure of protected health information. Here is how each component maps to AI system design.

1. The Privacy Rule

The Privacy Rule defines Protected Health Information (PHI): any individually identifiable information that relates to a patient's health condition, healthcare services, or payment for care. For AI systems, this means that any dataset containing names, dates (other than year), geographic identifiers smaller than a state, phone numbers, email addresses, biometric identifiers, or any other of the 18 Safe Harbor identifiers must be treated as PHI from ingestion to inference.

The minimum necessary standard is critically important for AI teams. Your model does not need raw, identifiable records if a de-identified or synthetic dataset can accomplish the same training objective. Default to the least invasive data representation that serves the clinical purpose.
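One way to make the minimum necessary standard enforceable in code, rather than a matter of convention, is a deny-by-default projection keyed by approved purpose. The sketch below assumes a hypothetical `PURPOSE_ALLOWLISTS` table maintained by a privacy officer; the purpose names and field names are illustrative only.

```python
from typing import Any

# Hypothetical per-purpose allow-lists: each approved purpose names the only
# fields it may read. Purposes and field names are illustrative assumptions.
PURPOSE_ALLOWLISTS: dict[str, frozenset[str]] = {
    "sepsis_model_training": frozenset({"age_years", "heart_rate", "lactate", "wbc_count"}),
    "readmission_model_training": frozenset({"age_years", "prior_admissions", "discharge_dx"}),
}


def minimum_necessary(record: dict[str, Any], purpose: str) -> dict[str, Any]:
    """Return only the fields approved for `purpose`."""
    allowed = PURPOSE_ALLOWLISTS.get(purpose)
    if allowed is None:
        # Deny by default: a purpose without an approved allow-list gets no data at all.
        raise PermissionError(f"no approved field allow-list for purpose {purpose!r}")
    return {k: v for k, v in record.items() if k in allowed}


raw = {
    "patient_name": "Jane Doe",
    "mrn": "123456",
    "age_years": 67,
    "heart_rate": 112,
    "lactate": 3.1,
    "wbc_count": 14.2,
}
print(minimum_necessary(raw, "sepsis_model_training"))
# {'age_years': 67, 'heart_rate': 112, 'lactate': 3.1, 'wbc_count': 14.2}
```

Failing closed on unknown purposes means a new use case has to be reviewed and given its own allow-list before it can read anything.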

2. The Security Rule

The Security Rule mandates technical safeguards for electronic PHI (ePHI). For AI systems, this translates directly to encryption requirements, access controls, audit logs, and transmission security across every layer of the data pipeline, from data lake to training cluster to inference endpoint.

3. The Breach Notification Rule

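When a breach involving unsecured PHI occurs, covered entities must notify affected individuals without unreasonable delay and no later than 60 calendar days after discovering it. Breaches affecting more than 500 residents of a state or jurisdiction also require notice to prominent media outlets serving that area, and breaches affecting 500 or more individuals must be reported to HHS within the same 60-day window. For AI systems, this means breach detection and incident response plans must be built into your MLOps and monitoring infrastructure, not bolted on as a manual process.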
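The federal timelines can be encoded directly in incident-response tooling so that deadlines are computed the moment a breach is discovered. This is a minimal sketch of the HIPAA thresholds only: `plan_notifications` and `BreachNotificationPlan` are hypothetical names, and state breach laws, which can impose shorter deadlines, are not modeled.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Outer limit for notifying affected individuals: 60 calendar days after discovery.
# "Without unreasonable delay" means notifying sooner is expected when possible.
INDIVIDUAL_NOTICE_DAYS = 60


@dataclass
class BreachNotificationPlan:
    individual_notice_deadline: date
    media_notice_states: list[str]  # states/jurisdictions with more than 500 affected residents
    hhs_notice_deadline: date


def plan_notifications(discovered: date, affected_by_state: dict[str, int]) -> BreachNotificationPlan:
    deadline = discovered + timedelta(days=INDIVIDUAL_NOTICE_DAYS)
    # Media notice is triggered per state, by more than 500 residents of that state.
    media_states = sorted(s for s, n in affected_by_state.items() if n > 500)
    total = sum(affected_by_state.values())
    if total >= 500:
        # 500 or more individuals: report to HHS within the same 60-day window.
        hhs_deadline = deadline
    else:
        # Fewer than 500: log the breach and report it to HHS within 60 days
        # after the end of the calendar year in which it was discovered.
        hhs_deadline = date(discovered.year, 12, 31) + timedelta(days=60)
    return BreachNotificationPlan(deadline, media_states, hhs_deadline)


plan = plan_notifications(date(2024, 3, 1), {"CA": 620, "NV": 40})
print(plan.individual_notice_deadline, plan.media_notice_states)  # 2024-04-30 ['CA']
```

For that example breach, individual notice is due by 30 April 2024, media notice is required in California only, and the HHS report shares the same deadline.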

4. Business Associate Agreements (BAA)

If your AI system involves any third-party vendor (a cloud provider, an ML platform, an annotation service) that will access PHI, a signed BAA is legally required before any PHI is shared. This applies to cloud-hosted GPU clusters, managed ML platforms, and any API endpoint that may receive patient data. Always verify BAA coverage before onboarding an AI vendor into a PHI-adjacent workflow.
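A BAA check can be enforced as a hard gate in the code path that sends data to a vendor, so that PHI cannot be routed to an unvetted service by accident. The sketch assumes a hypothetical `VENDOR_REGISTRY` populated by legal and procurement; vendor names, service labels, and dates are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class VendorRecord:
    name: str
    baa_signed: bool
    baa_expires: Optional[date]  # None: no expiry date recorded
    covered_services: frozenset[str]


# Hypothetical registry maintained by legal/procurement; contents are illustrative.
VENDOR_REGISTRY = {
    "gpu-cloud": VendorRecord("gpu-cloud", True, date(2026, 6, 30), frozenset({"training", "inference"})),
    "label-co": VendorRecord("label-co", False, None, frozenset()),
}


class BAAViolation(Exception):
    pass


def assert_phi_allowed(vendor: str, service: str, today: date) -> None:
    """Raise unless `vendor` has an active, signed BAA that covers `service`."""
    rec = VENDOR_REGISTRY.get(vendor)
    if rec is None or not rec.baa_signed:
        raise BAAViolation(f"{vendor}: no signed BAA on file")
    if rec.baa_expires is not None and today > rec.baa_expires:
        raise BAAViolation(f"{vendor}: BAA expired on {rec.baa_expires}")
    if service not in rec.covered_services:
        raise BAAViolation(f"{vendor}: service {service!r} is not covered by the BAA")


# Call before any request that could carry PHI; an exception blocks the transfer.
assert_phi_allowed("gpu-cloud", "training", date(2025, 1, 1))
```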

Where AI Systems Interact with PHI

Understanding where PHI enters, flows through, and exits your AI system is the foundation of compliant architecture. The following table maps common healthcare AI use cases to their PHI exposure points.

In practice, PHI rarely stays within a single component. It flows: from an EHR system into an ingestion pipeline, into a data lake, into a training dataset, into a model artifact, and finally into an inference endpoint serving a clinical application. Every handoff between these stages is a potential compliance gap.
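Mapping that flow explicitly makes each handoff auditable. The sketch below models the stages named above as a small graph with hypothetical control flags and reports every PHI-carrying edge whose destination is missing a control; in a real system the flags would come from an infrastructure inventory rather than hand-written constants.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    encrypted_at_rest: bool
    access_logged: bool
    phi_approved: bool  # has this component been approved to hold PHI?


# Hypothetical inventory of the stages in the flow described above.
STAGES = {
    s.name: s
    for s in [
        Stage("ehr", True, True, True),
        Stage("ingestion", True, True, True),
        Stage("data_lake", True, False, True),
        Stage("training_set", True, True, False),
        Stage("model_artifact", True, True, False),
        Stage("inference", True, True, True),
    ]
}

# Each edge is (source, destination, carries_phi).
FLOWS = [
    ("ehr", "ingestion", True),
    ("ingestion", "data_lake", True),
    ("data_lake", "training_set", True),  # raw PHI copied into a non-PHI stage
    ("training_set", "model_artifact", False),
    ("model_artifact", "inference", False),
]


def audit_handoffs(stages: dict[str, Stage], flows: list[tuple[str, str, bool]]) -> list[str]:
    """Return one finding for every missing control on a PHI-carrying handoff."""
    findings = []
    for src, dst, carries_phi in flows:
        if not carries_phi:
            continue
        target = stages[dst]
        if not target.phi_approved:
            findings.append(f"{src}->{dst}: PHI enters a stage not approved for PHI")
        if not target.encrypted_at_rest:
            findings.append(f"{src}->{dst}: destination is not encrypted at rest")
        if not target.access_logged:
            findings.append(f"{src}->{dst}: destination has no access logging")
    return findings


for finding in audit_handoffs(STAGES, FLOWS):
    print(finding)
```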

Designing a HIPAA-Compliant AI Architecture

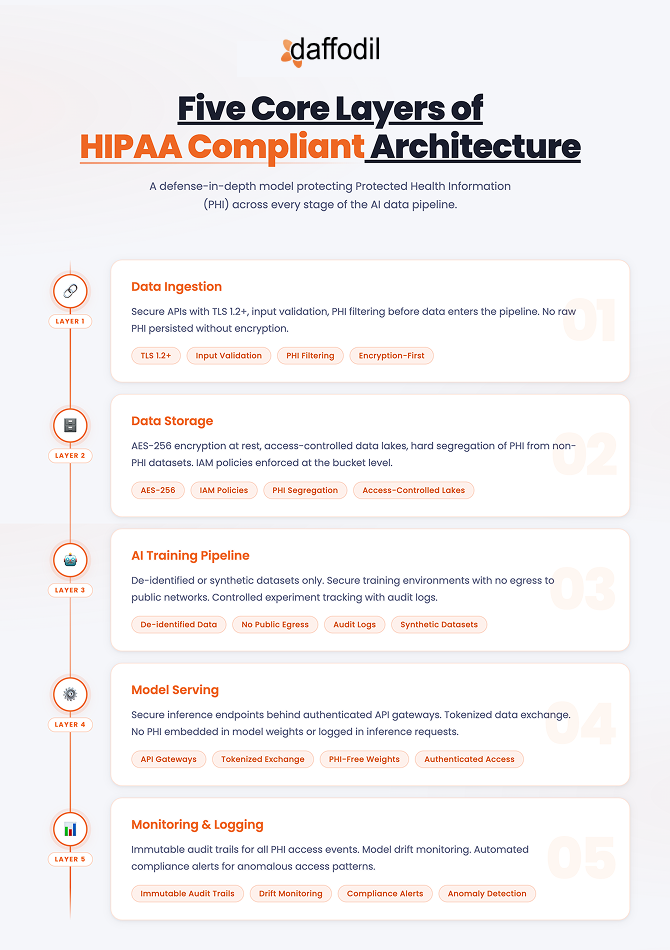

A compliant AI architecture is not a single technology choice; it is a layered system where each layer enforces its own set of controls. The five core layers are: data ingestion, data storage, training pipeline, model serving, and monitoring.

A key design principle: treat the boundary between PHI and de-identified data as a hard zone boundary. It should not be a logical boundary managed by convention, but an infrastructure boundary enforced by network controls, IAM policies, and automated scanning.
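Network controls and IAM policies are cloud-specific, but the automated-scanning part of the boundary can be expressed as a promotion gate that every dataset must pass before it is copied into the de-identified zone. This is a minimal sketch: `APPROVED_DEID_COLUMNS` is a hypothetical schema, and the three regular expressions are illustrative, far short of a complete identifier scanner.

```python
import re

# Hypothetical schema approved for the de-identified zone.
APPROVED_DEID_COLUMNS = frozenset({"age_band", "zip3", "admit_year", "dx_code", "lab_value"})

# A few coarse patterns for values that should never cross the boundary.
SUSPICIOUS_VALUE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),         # SSN-like
    re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),  # email-like
    re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),         # full ISO date
]


class ZoneBoundaryError(Exception):
    pass


def promote_to_deid_zone(rows: list[dict]) -> list[dict]:
    """Copy rows across the PHI -> de-identified boundary only if every check passes."""
    for i, row in enumerate(rows):
        extra = set(row) - APPROVED_DEID_COLUMNS
        if extra:
            raise ZoneBoundaryError(f"row {i}: unapproved columns {sorted(extra)}")
        for col, value in row.items():
            if any(p.search(str(value)) for p in SUSPICIOUS_VALUE_PATTERNS):
                raise ZoneBoundaryError(f"row {i}: column {col!r} looks like an identifier")
    # Return copies so the de-identified zone never shares objects with the PHI zone.
    return [dict(r) for r in rows]
```

Raising on the first failure, rather than silently dropping rows, forces a human to look at why identifiers reached the boundary at all.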

De-Identification & Data Minimization Strategies

De-identification is the most powerful tool in the HIPAA-compliant AI toolkit. A properly de-identified dataset is not subject to the Privacy Rule; it can be used for model training, shared across teams, and in some cases even published, without triggering HIPAA obligations.

De-Identification Methods

HIPAA recognizes two de-identification methods: Safe Harbor (remove all 18 specified identifiers) and Expert Determination (a qualified expert applies statistical methods and certifies that re-identification risk is very small). Beyond these, differential privacy, tokenization, and synthetic data generation have all matured into practical production tools.
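To make the Safe Harbor transformations and tokenization concrete, the sketch below handles a few fields of a hypothetical record: a random-token vault for the medical record number, the over-89 age rule, year-only dates, and the 3-digit ZIP rule. It does not cover all 18 identifiers, and `restricted_zip3` is a caller-supplied assumption that must be built from current Census population data (3-digit areas with 20,000 people or fewer become `000`). Tokens are drawn at random rather than hashed from the identifier, because Safe Harbor requires that any re-identification code not be derived from information about the individual.

```python
import secrets
from datetime import date


class TokenVault:
    """Maps identifiers to random tokens; the vault itself is PHI and stays in the PHI zone."""

    def __init__(self) -> None:
        self._forward: dict[str, str] = {}

    def tokenize(self, identifier: str) -> str:
        # Random, not derived from the identifier; the same input always gets the same token.
        if identifier not in self._forward:
            self._forward[identifier] = "tok_" + secrets.token_hex(8)
        return self._forward[identifier]


def generalize_age(age: int) -> str:
    # Safe Harbor: ages over 89 are aggregated into a single "90 or older" category.
    return "90+" if age > 89 else str(age)


def generalize_zip(zip_code: str, restricted_zip3: frozenset[str]) -> str:
    # Keep the first three digits unless that 3-digit area is too sparsely populated.
    zip3 = zip_code[:3]
    return "000" if zip3 in restricted_zip3 else zip3


def deidentify(record: dict, vault: TokenVault, restricted_zip3: frozenset[str]) -> dict:
    """Partial Safe Harbor transform for a few illustrative fields (not all 18 identifiers)."""
    return {
        "patient_token": vault.tokenize(record["mrn"]),
        "age": generalize_age(record["age"]),
        "admit_year": record["admit_date"].year,  # keep the year only
        "zip3": generalize_zip(record["zip"], restricted_zip3),
        "dx_code": record["dx_code"],
    }


vault = TokenVault()
record = {"mrn": "123456", "age": 93, "admit_date": date(2023, 5, 14), "zip": "02139", "dx_code": "A41.9"}
print(deidentify(record, vault, restricted_zip3=frozenset({"999"})))  # "999" is a placeholder set
```

Because the vault links tokens back to patients, it needs the same controls as the source data.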

Encryption & Least-Privilege Access

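The Security Rule treats encryption as an addressable safeguard rather than prescribing algorithms, but HHS guidance points to NIST standards, and PHI encrypted to those standards counts as "secured" for breach notification purposes. In practice, that means AES-256 for PHI at rest and TLS 1.2 or later in transit. Every service account and training job should operate under granular least-privilege IAM roles. Store encryption keys in hardware security modules or managed key vaults, never in application code or environment variables.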
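Two of these controls translate directly into code. The sketch below uses only Python's standard `ssl` module to build a client TLS context that refuses anything older than TLS 1.2, and pairs it with a deny-by-default permission check. `ROLE_GRANTS` and the role and resource names are hypothetical; in production the equivalent policy would live in your cloud provider's IAM, and keys would stay in an HSM or managed key vault.

```python
import ssl


def phi_tls_context() -> ssl.SSLContext:
    """Client TLS context for PHI traffic: verified certificates, TLS 1.2 minimum."""
    ctx = ssl.create_default_context()  # enables certificate and hostname verification
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


# Hypothetical role -> grant table. Anything not listed is denied.
ROLE_GRANTS: dict[str, frozenset[str]] = {
    "training-job-sepsis": frozenset({"read:deid/sepsis-v3"}),
    "inference-api": frozenset({"read:models/sepsis-v3"}),
    "phi-ingestion": frozenset({"read:ehr/feed", "write:phi-zone/raw"}),
}


def is_allowed(role: str, action: str, resource: str) -> bool:
    """Deny by default; allow only exact action:resource grants (no wildcards)."""
    return f"{action}:{resource}" in ROLE_GRANTS.get(role, frozenset())


print(is_allowed("training-job-sepsis", "read", "deid/sepsis-v3"))  # True
print(is_allowed("training-job-sepsis", "read", "ehr/feed"))        # False
```

Avoiding wildcards keeps each grant reviewable: a training job that needs a new dataset must have that exact resource added.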

Advanced Approaches

Federated learning allows a model to train across multiple hospitals without patient data ever leaving the originating institution; only model updates are exchanged. Confidential computing (Intel TDX, AMD SEV) provides hardware-level isolation during computation: even the cloud provider cannot access data being processed inside the trusted execution environment.
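The aggregation step of the most common federated learning algorithm, federated averaging (FedAvg), fits in a few lines: each site trains locally and sends back only its parameters and sample count, and the coordinator computes a sample-weighted average. This is a minimal sketch with parameters as plain Python lists; a real deployment would use a federated learning framework and an authenticated, encrypted transport.

```python
def federated_average(site_updates: list[tuple[list[float], int]]) -> list[float]:
    """FedAvg aggregation: average each parameter, weighted by the site's example count."""
    total = sum(n for _, n in site_updates)
    if total == 0:
        raise ValueError("no training examples reported")
    dim = len(site_updates[0][0])
    global_params = [0.0] * dim
    for params, n in site_updates:
        if len(params) != dim:
            raise ValueError("all sites must report the same parameter shape")
        for i, p in enumerate(params):
            global_params[i] += p * n / total
    return global_params


# Each hospital trains on its own records and shares only (parameters, example count).
hospital_a = ([1.0, 2.0], 100)
hospital_b = ([3.0, 4.0], 300)
print(federated_average([hospital_a, hospital_b]))  # [2.5, 3.5]
```

Shared model updates can still leak information about training records, so production deployments often combine federated averaging with secure aggregation or differential privacy.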

HIPAA Considerations When Using LLMs

LLMs have rapidly entered clinical workflows, summarizing discharge notes, drafting prior authorizations, and powering patient chat. They also represent one of the most significant new HIPAA risks in healthcare, because they may retain, memorize, or inadvertently reproduce training data.

Critical: Sending PHI to a public LLM API without a signed BAA and HIPAA-eligible configuration is a potential HIPAA violation, regardless of how the prompt is structured. The transmission itself is the violation.

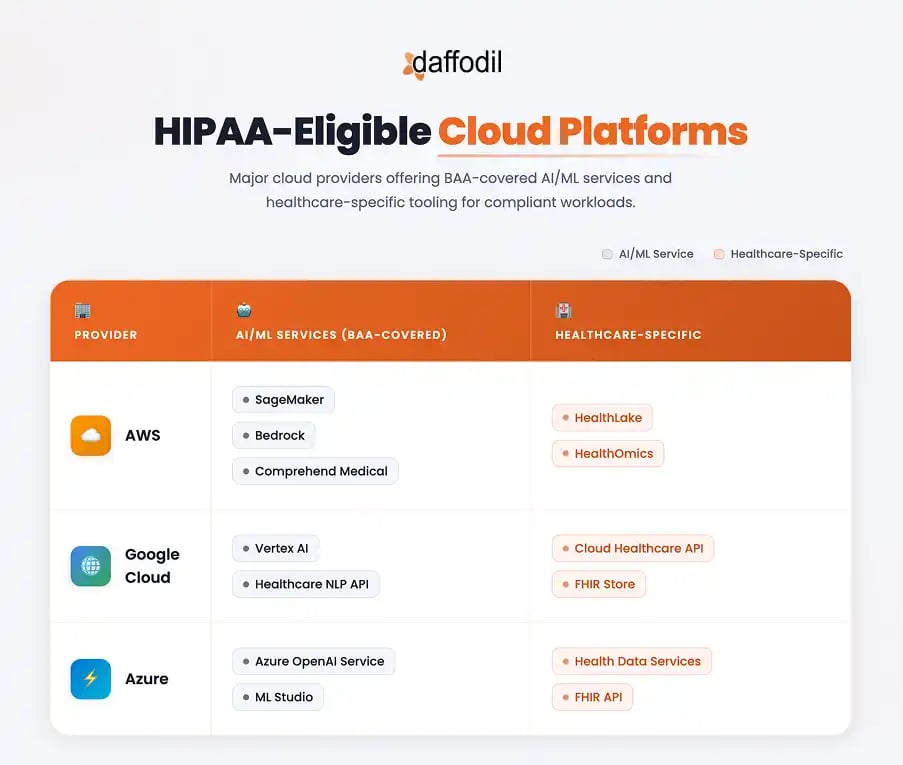

Preferred: HIPAA-eligible cloud AI: Azure OpenAI, AWS Bedrock, or Google Vertex AI with signed BAAs and data isolation guarantees.

Preferred: Private model hosting: Open-weight models (Llama, Mistral, BioMedLM) deployed in your own HIPAA-compliant VPC. Full data control, no third-party dependency.

Avoid: Public APIs without BAA: Consumer-facing endpoints, free-tier access. Never route patient data through these, regardless of perceived anonymization.

Even with a HIPAA-eligible provider, implement a PHI redaction layer between clinical data and the LLM prompt. Use NER models (scispaCy, AWS Comprehend Medical) to detect and strip identifiers before prompt construction, and monitor outputs for PHI leakage using automated pattern scanning.
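A redaction layer of this kind sits between the clinical record and prompt construction, with a matching scan on the model's output. The sketch below uses regular expressions only as a stand-in for the NER models named above: it catches structured identifiers such as SSNs, phone numbers, emails, MRNs, and slash-formatted dates, but not names or addresses, so it illustrates the control flow rather than serving as a production redactor. The `PHI_PATTERNS` table and the MRN format are assumptions.

```python
import re

# Regex stand-ins for a few structured identifiers; free-text identifiers such as
# names and addresses need an NER model in practice. Order matters: SSN before PHONE.
PHI_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\(?\b\d{3}\)?[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "MRN": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),  # assumed MRN format
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
}


def redact(text: str) -> str:
    """Replace each detected identifier with a typed placeholder before prompt construction."""
    for label, pattern in PHI_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def output_leaks_phi(text: str) -> list[str]:
    """Scan model output and return the labels of any identifier patterns found."""
    return [label for label, pattern in PHI_PATTERNS.items() if pattern.search(text)]


note = "Pt MRN 00482913, DOB 03/14/1958, call (617) 555-0142 re: lactate 3.1."
prompt = "Summarize for discharge:\n" + redact(note)
print(prompt)
# Summarize for discharge:
# Pt [MRN], DOB [DATE], call [PHONE] re: lactate 3.1.
```

Typed placeholders keep the prompt readable for the model while making it obvious in logs which identifier classes were removed.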

Explainable AI and Compliance Governance

Explainability as a Compliance Requirement

A model that recommends against a sepsis alert or flags a scan as benign must be interrogatable by clinicians, compliance officers, and regulators. SHAP, LIME, and imaging saliency maps surface which features or regions drove a prediction; these outputs should be persisted alongside predictions as compliance artifacts. Bias detection is equally non-negotiable: a model that performs worse for patients of a particular race, sex, or age may violate Section 1557 of the Affordable Care Act in addition to causing clinical harm.
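Bias detection can start with something as simple as comparing sensitivity (the share of true positive cases the model catches) across demographic subgroups. The sketch below assumes binary labels and predictions plus a parallel list of group labels; the 0.05 tolerance in `flag_disparities` is a hypothetical threshold that a governance committee would need to set for each clinical use case.

```python
from collections import defaultdict


def sensitivity_by_group(y_true: list[int], y_pred: list[int], groups: list[str]) -> dict[str, float]:
    """Sensitivity (true positive rate) per subgroup: TP / (TP + FN) over actual positives."""
    positives: dict[str, int] = defaultdict(int)
    caught: dict[str, int] = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            caught[group] += int(pred == 1)
    return {g: caught[g] / positives[g] for g in positives}


def flag_disparities(rates: dict[str, float], max_gap: float = 0.05) -> list[str]:
    """Groups whose sensitivity trails the best-served group by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


y_true = [1, 1, 1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 1]
groups = ["A", "A", "A", "B", "B", "B", "A", "B"]
rates = sensitivity_by_group(y_true, y_pred, groups)
print(rates)                    # {'A': 1.0, 'B': 0.3333333333333333}
print(flag_disparities(rates))  # ['B']
```

For a sepsis model, a sensitivity gap means one group's septic patients are missed more often, which is why this metric is a natural first check; other use cases may call for comparing specificity or calibration as well.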

Operational Governance Checklist

- Maintain a live model registry documenting every AI system in production with its clinical use case and validation status
- Run quarterly access audits, and revoke permissions immediately when staff depart or change roles
- Require annual HIPAA training for all staff with access to PHI-adjacent AI systems
- Hold tabletop incident response exercises twice yearly, simulating AI-related breach scenarios
- Establish a clinical AI governance committee with representation from clinical leadership, IT security, and legal
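The access-audit item can be partly automated by reconciling permission grants against the current staff roster. In this sketch, `Grant` records and the `active_staff` mapping (user ID to current job title, for example from an HR export) are hypothetical inputs; any grant whose holder has left or changed roles is flagged for revocation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    user: str
    role: str
    granted_for_job: str  # job title the grant was originally approved for


def stale_grants(grants: list[Grant], active_staff: dict[str, str]) -> list[Grant]:
    """Return grants whose holder has left (absent from roster) or changed jobs."""
    stale = []
    for g in grants:
        current_job = active_staff.get(g.user)
        if current_job is None or current_job != g.granted_for_job:
            stale.append(g)
    return stale


grants = [
    Grant("amy", "phi-reader", "data engineer"),
    Grant("bob", "phi-reader", "data engineer"),     # bob has left
    Grant("cai", "model-deployer", "ml engineer"),   # cai changed roles
]
roster = {"amy": "data engineer", "cai": "product manager"}
print([g.user for g in stale_grants(grants, roster)])  # ['bob', 'cai']
```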

Compliant ML Operations and Cloud Infrastructure

1. Secure MLOps Practices

Every model artifact in production must be registered with full provenance metadata: dataset version, training run ID, evaluation results, and clinical approval record. Rollback mechanisms must be tested quarterly; if a model exhibits unexpected behavior, you must be able to revert within hours. Compliance logging should generate tamper-evident audit records for every training run, deployment event, and PHI access, shipped to a SIEM backed by immutable (write-once) storage.
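Tamper evidence is commonly achieved by hash-chaining audit records, so that each entry's SHA-256 hash covers both its content and the previous entry's hash. The sketch below uses only the standard library; the event fields (`run_id`, `dataset_version`, and so on) are illustrative, and a real system would also ship each entry to the SIEM and store the latest hash externally, since the chain alone cannot reveal that the newest entries were truncated.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_event(log: list[dict], event: dict) -> dict:
    """Append an audit entry whose hash covers its content and the previous entry's hash."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; an edited, reordered, or removed interior entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        body = {k: entry[k] for k in ("ts", "event", "prev_hash")}
        if hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest() != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_event(audit_log, {"type": "training_run", "run_id": "run-042", "dataset_version": "deid-sepsis-v3"})
append_event(audit_log, {"type": "deployment", "model": "sepsis-v3", "approved_by": "clinical-ai-committee"})
print(verify_chain(audit_log))  # True
audit_log[0]["event"]["dataset_version"] = "raw-phi-v1"  # simulated tampering
print(verify_chain(audit_log))  # False
```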

2. HIPAA-Eligible Cloud Platforms

All three major cloud providers (AWS, Microsoft Azure, and Google Cloud) will sign BAAs covering many of their AI/ML services, but BAA coverage is not automatic compliance. You must use only the services the BAA covers, configure them correctly, and enforce a VPC-first architecture with private endpoints for all service-to-service communication.

Common Pitfalls to Avoid

These are real mistakes made repeatedly in healthcare AI applications, each entirely preventable with the right architecture discipline.

Critical Mishaps

- Sending PHI to non-HIPAA-eligible APIs: Integrating a clinical chatbot with a public LLM without verifying BAA coverage. Developer intent is irrelevant; the transmission is the violation.
- Training on unencrypted PHI: Labeled clinical datasets stored in unencrypted S3 buckets or on local developer workstations.
- No audit trails on PHI access: ML pipelines running without tamper-evident logs; inability to demonstrate access records during a compliance investigation.

Serious Mishaps

- DICOM metadata not stripped: Medical images carry patient identifiers in file metadata that survive format conversion. Automated de-identification must be applied before any imaging dataset is used for training.
- No BAA before vendor onboarding: Piloting AI vendor tools with real patient data before legal agreements are in place. Procurement review must precede technical integration.
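The DICOM header-stripping step can be sketched independently of any imaging library by modeling the header as a mapping from `(group, element)` tags to values. The identifying tags listed are a small illustrative subset; the DICOM standard's Basic Application Level Confidentiality Profile (PS3.15 Annex E) enumerates the full set a real de-identifier must handle, and libraries such as pydicom provide equivalent operations on parsed files (for example, `Dataset.remove_private_tags()`). Identifiers burned into the pixel data survive header stripping, so the sketch flags those images for separate handling.

```python
# Small illustrative subset of identifying attributes; not the full Annex E list.
IDENTIFYING_TAGS = {
    (0x0010, 0x0010),  # PatientName
    (0x0010, 0x0020),  # PatientID
    (0x0010, 0x0030),  # PatientBirthDate
    (0x0010, 0x1000),  # OtherPatientIDs
    (0x0010, 0x1040),  # PatientAddress
    (0x0008, 0x0020),  # StudyDate
    (0x0008, 0x0050),  # AccessionNumber
    (0x0008, 0x0080),  # InstitutionName
    (0x0008, 0x0090),  # ReferringPhysicianName
}
BURNED_IN_ANNOTATION = (0x0028, 0x0301)


def strip_dicom_header(header: dict[tuple[int, int], object]) -> tuple[dict[tuple[int, int], object], list[str]]:
    """Drop identifying and private tags; return the cleaned header plus warnings."""
    cleaned = {}
    for tag, value in header.items():
        group, _element = tag
        if tag in IDENTIFYING_TAGS or group % 2 == 1:  # odd group numbers are private tags
            continue
        cleaned[tag] = value
    warnings = []
    if header.get(BURNED_IN_ANNOTATION) == "YES":
        warnings.append("burned-in annotation: pixel data needs scrubbing or manual review")
    return cleaned, warnings


header = {
    (0x0010, 0x0010): "DOE^JANE",
    (0x0010, 0x0020): "123456",
    (0x0008, 0x0060): "CT",        # Modality: kept
    (0x0009, 0x0010): "VENDOR-X",  # private tag: dropped
    (0x0028, 0x0301): "YES",
}
cleaned, warnings = strip_dicom_header(header)
print(warnings)
```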

The Future of HIPAA-Compliant AI

The regulatory landscape is not static. The FDA is developing guidance for AI/ML-based Software as a Medical Device. US federal AI regulation, likely addressing algorithmic transparency, mandatory bias auditing, and risk classification, appears increasingly probable. Three technology trends are reshaping what compliance looks like in practice.

Build It Right From Day One

Healthcare AI is not an engineering problem with a compliance wrapper; it is a compliance problem that requires exceptional engineering. Every design decision has regulatory implications.

The organizations that will succeed are those that treat privacy, security, and governance as core engineering requirements, enforced at every layer, audited continuously, and embedded in team culture from the first sprint. The technology is ready. The regulatory framework exists. What remains is the discipline to build it right.